https://theconversation.com/health-misinformation-is-rampant-on-social-media-heres-what-it-does-why-it-spreads-and-what-people-can-do-about-it-217059

Health misinformation is rampant on social media – here’s what it does, why it spreads and what people can do about it

Published: December 13, 2023

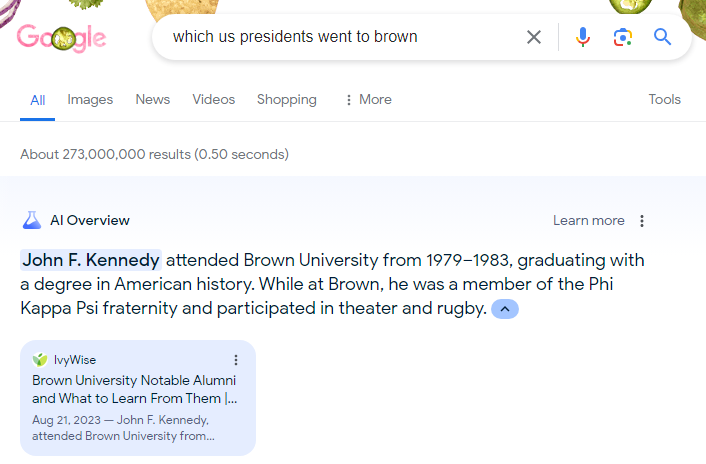

Below are some steps that consumers can take to identify and prevent health misinformation spread:

Check the source. Determine the credibility of the health information by checking if the source is a reputable organization or agency such as the World Health Organization, the National Institutes of Health or the Centers for Disease Control and Prevention. Other credible sources include an established medical or scientific institution or a peer-reviewed study in an academic journal. Be cautious of information that comes from unknown or biased sources.

Examine author credentials. Look for qualifications, expertise and relevant professional affiliations for the author or authors presenting the information. Be wary if author information is missing or difficult to verify.

Pay attention to the date. Scientific knowledge by design is meant to evolve as new evidence emerges. Outdated information may not be the most accurate. Look for recent data and updates that contextualize findings within the broader field.

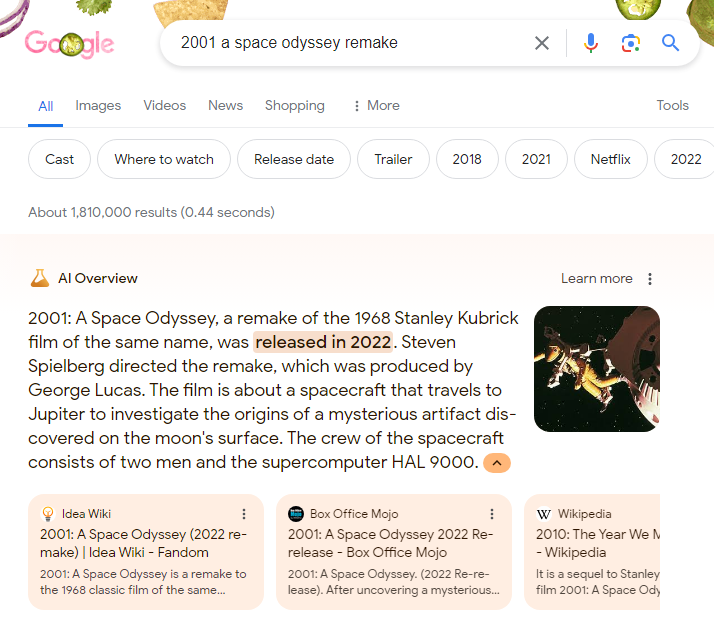

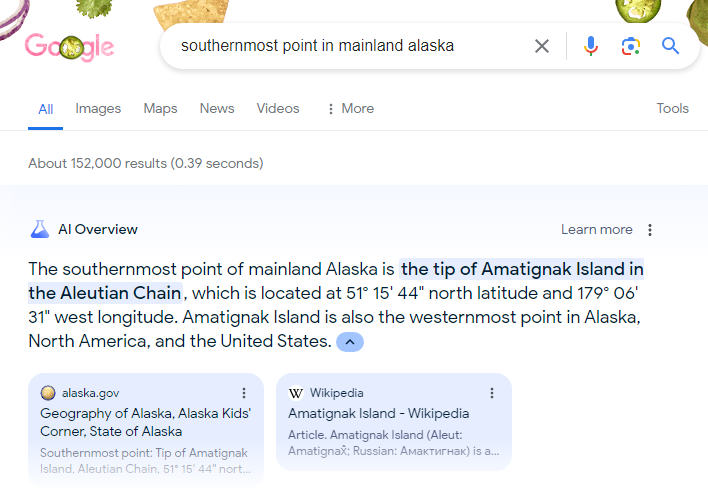

Cross-reference to determine scientific consensus. Cross-reference information across multiple reliable sources. Strong consensus across experts and multiple scientific studies supports the validity of health information. If a health claim on social media contradicts widely accepted scientific consensus and stems from unknown or unreputable sources, it is likely unreliable.

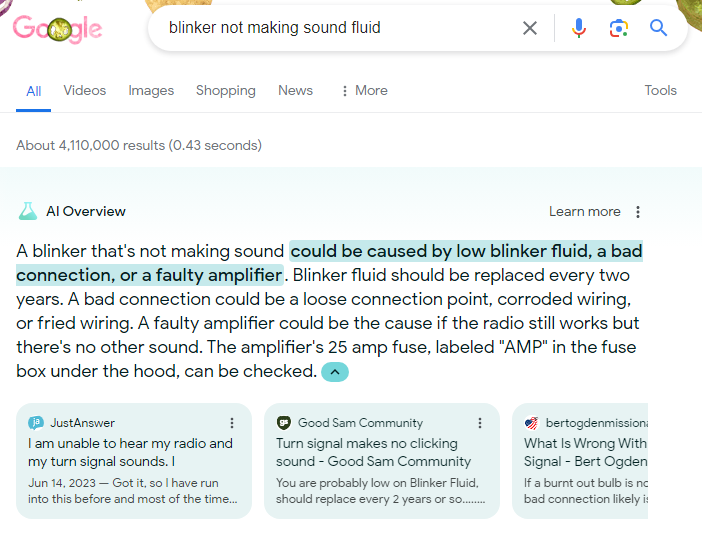

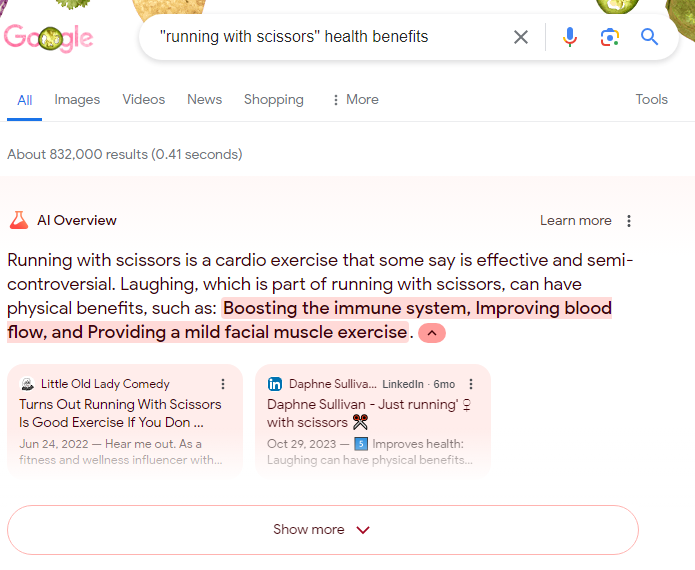

Question sensational claims. Misleading health information often uses sensational language designed to provoke strong emotions to grab attention. Phrases like “miracle cure,” “secret remedy” or “guaranteed results” may signal exaggeration. Be alert for potential conflicts of interest and sponsored content.

Weigh scientific evidence over individual anecdotes. Prioritize information grounded in scientific studies that have undergone rigorous research methods, such as randomized controlled trials, peer review and validation. When done well with representative samples, the scientific process provides a more reliable foundation for health recommendations than individual anecdotes do. Though personal stories can be compelling, they should not be the sole basis for health decisions.

Talk with a health care professional. If health information is confusing or contradictory, seek guidance from trusted health care providers who can offer personalized advice based on their expertise and your individual health needs.

When in doubt, don’t share. Sharing health claims without validity or verification contributes to misinformation spread and preventable harm. ...